Safeguarding the Future: Why Data Security is Non-Negotiable in the GenAI Era

In the GenAI Gold Rush, Your Data Is the Gold

In 2025, Generative AI (GenAI) isn’t just a buzzword; it’s the engine powering everything from personalized customer experiences to automated code generation. Adoption has surged, and organizations now process unprecedented volumes of data to train models and generate outputs. But this innovation comes with a stark reality: the very tools accelerating business growth are also amplifying data security risks. A single breach could expose sensitive customer information, intellectual property, or proprietary algorithms, leading to financial losses, regulatory fines, and eroded trust. As we navigate this AI-driven landscape, robust data security isn’t optional. It’s the foundation of sustainable innovation.

In this post, we’ll explore why data security has become mission-critical amid GenAI’s rise and how Microsoft Purview stands out as a comprehensive solution to mitigate these threats. Whether you’re a CISO grappling with compliance or a developer integrating AI tools, understanding these dynamics can help you protect your organization’s most valuable asset: its data.

The GenAI Boom: Innovation at the Cost of Vulnerability

Generative AI thrives on data: vast datasets that include everything from customer records to internal documents. GenAI is widely expected to amplify existing safety and security risks, making threats like data exfiltration and manipulation more likely to occur at scale. Here’s why data security is more urgent than ever:

1. Expanded Attack Surfaces

GenAI models ingest and output massive amounts of information, often in real time. This creates new entry points for cybercriminals. For instance, adversarial attacks like prompt injection, where malicious inputs trick the AI into revealing confidential data, have become commonplace. Without proper safeguards, a seemingly innocuous query could leak sensitive details, turning your AI assistant into an unwitting informant.
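To make the prompt injection risk concrete, here is a minimal sketch of two common safeguards: keeping trusted instructions separate from untrusted text, and checking model output for known secrets before returning it. The names `SYSTEM_RULES`, `build_messages`, and `leaks_secret` are hypothetical, the role-based message layout is an assumption about a typical chat-style model API, and neither measure fully prevents injection; they only reduce the blast radius.

```python
# Illustrative only: none of these names come from a specific product or SDK.
SYSTEM_RULES = "You are a support assistant. Never reveal internal notes."


def build_messages(user_input: str, retrieved_doc: str) -> list[dict[str, str]]:
    """Keep trusted instructions and untrusted text in separate messages."""
    return [
        {"role": "system", "content": SYSTEM_RULES},
        {"role": "user", "content": user_input},
        # Retrieved content is treated as data, not instructions, and is
        # wrapped in a marker so downstream handling can tell it apart.
        {
            "role": "user",
            "content": f"<untrusted_document>\n{retrieved_doc}\n</untrusted_document>",
        },
    ]


def leaks_secret(model_output: str, secrets: list[str]) -> bool:
    """Output-side check: True if the output contains any known secret."""
    lowered = model_output.lower()
    return any(secret.lower() in lowered for secret in secrets)
```

The design choice here is defense in depth: even if a malicious document persuades the model to misbehave, the output check gives you a second chance to stop the leak.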

2. Regulatory and Compliance Pressures

With regulations like GDPR, CCPA, and emerging AI-specific laws tightening in 2025, organizations face steeper penalties for mishandling data. GenAI exacerbates this: models trained on unvetted data can inadvertently violate privacy rules, triggering audits and fines on top of the usual costs of a breach. Robust AI data security therefore serves two purposes: protecting user information from cyber threats and keeping pace with evolving standards.

3. Insider and Supply Chain Risks

GenAI democratizes access to powerful tools, but it also heightens insider threats. Employees experimenting with AI might accidentally (or intentionally) expose data through shared prompts or generated outputs. Meanwhile, third-party AI vendors introduce supply chain vulnerabilities, where tainted training data could propagate biases or backdoors.

The underlying concern is simple: AI systems now collect and store enormous personal datasets, which makes privacy a top concern in the digital age. In short, ignoring data security isn’t just risky. It’s a recipe for obsolescence in an AI-first world.

Enter Microsoft Purview: Your Unified Shield for AI-Era Data Protection

Microsoft Purview isn’t a band-aid solution; it’s a holistic platform for data governance, risk management, and compliance, purpose-built for the complexities of modern environments—including multicloud setups, SaaS apps, and on-premises stores. At its core, Purview unifies security across structured and unstructured data, helping organizations discover, classify, and protect assets while fueling safe AI innovation.

What sets Purview apart? It’s designed with GenAI in mind, offering integrated tools that go beyond reactive defenses to proactive risk mitigation. Let’s break down how it addresses the unique challenges of GenAI.

How Microsoft Purview Empowers Secure GenAI Adoption

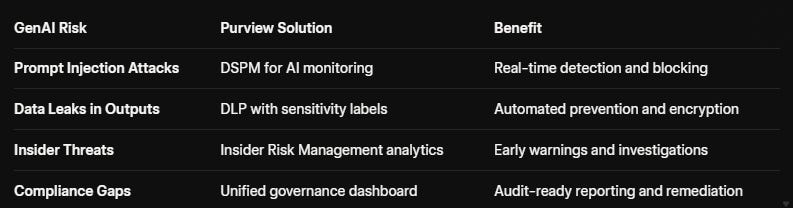

Purview’s suite of features provides end-to-end visibility and control, ensuring your data remains secure even as AI scales. Here’s a closer look at its GenAI-specific capabilities:

1. Data Security Posture Management (DSPM) for AI

This is Purview’s powerhouse for AI environments. DSPM for AI offers a centralized dashboard to monitor AI interactions in real time, flagging risks like unauthorized data access or anomalous prompts. For example, it scans GenAI usage across users, apps, and data sources, identifying attempts to manipulate systems or share sensitive information. Security teams gain visibility into high-risk behaviors, enabling quick remediation without disrupting workflows. In 2025, this feature has evolved to support proactive monitoring of AI apps, helping organizations secure data before it fuels a breach.

2. Information Protection and Data Loss Prevention (DLP)

Purview automatically classifies sensitive data using AI-driven labeling, then applies DLP policies to prevent leaks across endpoints, browsers, and cloud services. For GenAI, this means blocking prompts that could expose protected information or encrypting outputs to maintain compliance. Imagine training a model on customer data: classification and policy enforcement help ensure that only vetted subsets are used, reducing the risk of IP theft or regulatory violations.
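The classify-then-block idea can be sketched in a few lines. This is not Purview’s API or its classifiers; it is a simplified stand-in, assuming two hypothetical regex patterns (`us_ssn` and `email`) and hypothetical helpers `screen_prompt` and `redact`, to show what a DLP-style check on a prompt looks like before the prompt ever reaches a model.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration; production classifiers are far
# richer (checksums, context keywords, trainable models, and so on).
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


@dataclass
class Verdict:
    allowed: bool
    matches: list[str]


def screen_prompt(prompt: str) -> Verdict:
    """Block the prompt if any sensitive pattern is present."""
    matches = sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(prompt)
    )
    return Verdict(allowed=not matches, matches=matches)


def redact(prompt: str) -> str:
    """Alternative to blocking: replace each match with a placeholder."""
    for name, pattern in SENSITIVE_PATTERNS.items():
        prompt = pattern.sub(f"[{name.upper()}]", prompt)
    return prompt
```

Whether to block or redact is a policy decision: blocking is safer for regulated data, while redaction keeps employees productive when the sensitive value is incidental to the request.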

3. Insider Risk Management and Compliance Tools

GenAI amplifies insider threats, but Purview’s analytics detect subtle patterns, such as unusual data exports via AI tools. Integrated with Communication Compliance, it scans for regulatory red flags (e.g., SEC violations in financial chats) and generates audit-ready reports. Plus, the Security Copilot experience embedded in Purview uses natural-language queries to simplify investigations: ask “Show me AI-related data risks from last quarter,” and get actionable insights quickly.
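For intuition on what “detecting unusual data exports” involves, here is a deliberately simple sketch that compares each user’s export count today against that user’s own history using a z-score. It is an assumption-laden illustration, not how Purview’s analytics work: the function name `flag_unusual_exports`, the threshold of 3.0, and the minimum of five days of history are all hypothetical choices.

```python
from statistics import mean, pstdev


def flag_unusual_exports(
    history: dict[str, list[int]],
    today: dict[str, int],
    z_threshold: float = 3.0,
) -> list[str]:
    """Return users whose export count today is far above their own baseline."""
    flagged = []
    for user, count in today.items():
        past = history.get(user, [])
        if len(past) < 5:
            continue  # too little history to judge fairly
        baseline = mean(past)
        # Floor the spread at 1.0 so perfectly steady users do not cause
        # a division by zero or trigger on trivial changes.
        spread = max(pstdev(past), 1.0)
        if (count - baseline) / spread >= z_threshold:
            flagged.append(user)
    return sorted(flagged)
```

Comparing users against their own baseline, rather than a global limit, avoids flagging roles that legitimately export a lot of data every day.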

4. Seamless Integration for Fast-Track Security

Deploying GenAI shouldn’t mean starting from scratch. Purview integrates natively with Azure AI, Microsoft 365, and third-party ecosystems, allowing you to govern data across your entire estate. Recent enhancements, like pay-as-you-go pricing for AI-driven protections, make it scalable for enterprises of all sizes. As Infosys demonstrated, Purview’s SDK strengthens GenAI data security by providing granular controls over usage and access.

By leveraging these tools, organizations can mitigate AI-specific risks—like data poisoning or hallucination-induced errors—while complying with global standards. The result? Faster innovation with fewer headaches.

Charting a Secure Path Forward

As GenAI reshapes industries, the organizations that thrive will be those that treat data security as a strategic enabler, not a compliance checkbox. Microsoft Purview bridges this gap, offering the visibility, controls, and intelligence needed to harness AI’s potential securely.

Ready to fortify your data estate? Explore Microsoft Purview today and discover how it can transform your GenAI journey from risky to resilient. What AI security challenges are you facing? Share in the comments below—I’d love to hear your thoughts.